This is part 5 of a series of tutorials in which we develop the mathematical and algorithmic underpinnings of deep neural networks from scratch and implement our own neural network library in Python, mimicking the TensorFlow API. Start with the first part: I: Computational Graphs.

- Part I: Computational Graphs

- Part II: Perceptrons

- Part III: Training criterion

- Part IV: Gradient Descent and Backpropagation

- Part V: Multi-Layer Perceptrons

- Part VI: TensorFlow

Multi-layer perceptrons

Motivation

Many real-world classes that we encounter in machine learning are not linearly separable. This means that there does not exist any line with all the points of the first class on one side of the line and all the points of the other class on the other side. Let’s illustrate this with an example.

As we can see, it is impossible to draw a line that separates the blue points from the red points. Instead, our decision boundary has to have a rather complex shape. This is where multi-layer perceptrons come into play: They allow us to train a decision boundary of a more complex shape than a straight line.
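For readers who want to reproduce a dataset of this kind, here is a minimal NumPy sketch. The cluster centers and the spread of 0.2 follow the data-generation snippet quoted in the comments on this post; the seed and the helper name `make_clusters` are assumptions made for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)  # fixed seed, chosen only for reproducibility

def make_clusters(centers, n_per_cluster=25, spread=0.2):
    """Draw n_per_cluster Gaussian points around each (x, y) center."""
    return np.concatenate([
        spread * rng.standard_normal((n_per_cluster, 2)) + np.array(center)
        for center in centers
    ])

# Red points sit on one diagonal, blue points on the other (an XOR-like layout).
red_points = make_clusters([(0, 0), (1, 1)])
blue_points = make_clusters([(0, 1), (1, 0)])

# Both classes have roughly the same centroid, near (0.5, 0.5).
print(red_points.mean(axis=0), blue_points.mean(axis=0))
```

The near-identical centroids are a hint that no straight line can put all red points on one side and all blue points on the other.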

Computational graph

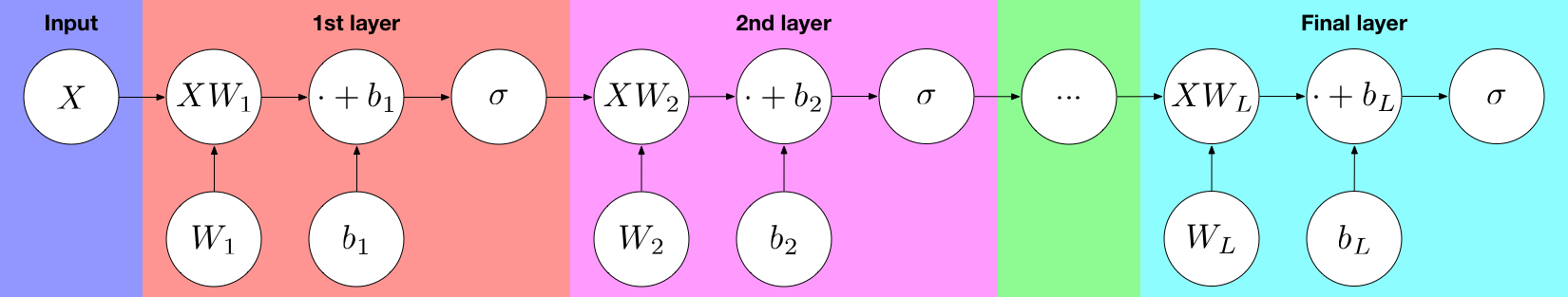

As their name suggests, multi-layer perceptrons (MLPs) are composed of multiple perceptrons stacked one after the other in a layer-wise fashion. Let’s look at a visualization of the computational graph:

As we can see, the input is fed into the first layer, which is a multidimensional perceptron with a weight matrix $W_1$ and bias vector $b_1$. The output of that layer is then fed into the second layer, which is again a perceptron with another weight matrix $W_2$ and bias vector $b_2$. This process continues through each of the $L$ layers. We refer to the last layer as the output layer and to every other layer as a hidden layer.

An MLP with one hidden layer computes the function

$$\sigma(\sigma(X \, W_1 + b_1) W_2 + b_2) \,,$$

an MLP with two hidden layers computes the function

$$\sigma(\sigma(\sigma(X \, W_1 + b_1) W_2 + b_2) \, W_3 + b_3) \,,$$

and, generally, an MLP with $L-1$ hidden layers computes the function

$$\sigma(\sigma( \cdots \sigma(\sigma(X \, W_1 + b_1) W_2 + b_2) \cdots) \, W_L + b_L) \,.$$
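The general formula amounts to a loop over the layers. The following is a minimal NumPy sketch of that forward pass, not the library code from this series; the function names, the layer sizes, and the random initialization are assumptions made for illustration.

```python
import numpy as np

def sigmoid(z):
    """Element-wise logistic function."""
    return 1.0 / (1.0 + np.exp(-z))

def mlp_forward(X, weights, biases):
    """Apply sigma(a W_l + b_l) for l = 1, ..., L, starting from a = X."""
    a = X
    for W, b in zip(weights, biases):
        a = sigmoid(a @ W + b)
    return a

rng = np.random.default_rng(0)

# Hypothetical sizes: 2 inputs, 3 hidden units, 1 output (so L = 2).
layer_sizes = [2, 3, 1]
weights = [rng.standard_normal((m, n))
           for m, n in zip(layer_sizes[:-1], layer_sizes[1:])]
biases = [np.zeros(n) for n in layer_sizes[1:]]

X = rng.standard_normal((5, 2))   # 5 example inputs, one per row
out = mlp_forward(X, weights, biases)
print(out.shape)
```

Each row of `X` is one input, so `out` has one row per input and one column per output unit.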

Implementation

Using the library we have built, we can now easily implement multi-layer perceptrons without further work.
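As a stand-in for the library built in the earlier parts, here is a minimal sketch in plain NumPy that trains an MLP with one hidden layer by gradient descent on the cross-entropy loss. Several choices are assumptions rather than details from this post: the hidden width of 8, the learning rate, the number of steps, the seed, and a single sigmoid output unit in place of a two-class softmax. The data uses the XOR-like cluster layout quoted in the comments.

```python
import numpy as np

rng = np.random.default_rng(0)  # arbitrary seed for reproducibility

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def clusters(centers, n=25, spread=0.2):
    """Gaussian blobs of n points around each center."""
    return np.concatenate(
        [spread * rng.standard_normal((n, 2)) + np.array(c) for c in centers]
    )

# Red points (label 0) and blue points (label 1) lie on opposite diagonals.
X = np.concatenate([clusters([(0, 0), (1, 1)]), clusters([(0, 1), (1, 0)])])
y = np.concatenate([np.zeros(50), np.ones(50)]).reshape(-1, 1)

n_hidden = 8  # assumed width, not taken from the post
W1 = rng.standard_normal((2, n_hidden))
b1 = np.zeros(n_hidden)
W2 = rng.standard_normal((n_hidden, 1))
b2 = np.zeros(1)

def forward(X, W1, b1, W2, b2):
    h = sigmoid(X @ W1 + b1)   # hidden layer activations
    p = sigmoid(h @ W2 + b2)   # predicted probability of the blue class
    return h, p

def cross_entropy(p, y):
    p = np.clip(p, 1e-12, 1 - 1e-12)  # avoid log(0)
    return float(-np.mean(y * np.log(p) + (1 - y) * np.log(1 - p)))

learning_rate = 0.5
initial_loss = cross_entropy(forward(X, W1, b1, W2, b2)[1], y)

for step in range(10000):
    h, p = forward(X, W1, b1, W2, b2)
    # Backpropagation via the chain rule, for the mean cross-entropy loss.
    dz2 = (p - y) / len(X)             # gradient at the output pre-activation
    dW2 = h.T @ dz2
    db2 = dz2.sum(axis=0)
    dz1 = (dz2 @ W2.T) * h * (1 - h)   # gradient at the hidden pre-activation
    dW1 = X.T @ dz1
    db1 = dz1.sum(axis=0)
    # Step in the direction of the negative gradient.
    W1 -= learning_rate * dW1
    b1 -= learning_rate * db1
    W2 -= learning_rate * dW2
    b2 -= learning_rate * db2

final_loss = cross_entropy(forward(X, W1, b1, W2, b2)[1], y)
accuracy = float(np.mean((forward(X, W1, b1, W2, b2)[1] > 0.5) == y))
print(f"loss: {initial_loss:.3f} -> {final_loss:.3f}, accuracy: {accuracy:.2f}")
```

How closely the result fits the data depends on the initialization and the hyperparameters, so the printed numbers may vary if these are changed.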

As we can see, we have learned a rather complex decision boundary. If we use more layers, the decision boundary can become arbitrarily complex, allowing us to learn classification patterns that would be impossible for a human to spot, especially in higher dimensions.

Recap

Congratulations on making it this far! You have learned the foundations of building neural networks from scratch, and in contrast to most machine learning practitioners, you now know how it all works under the hood and why it is done the way it is done.

Let’s recap what we have learned. We started out by considering computational graphs in general, and we saw how to build them and how to compute their output. We then moved on to describe perceptrons, which are linear classifiers that assign a probability to each output class by squashing the output of $w^Tx+b$ through a sigmoid (or softmax, in the case of multiple classes). Following that, we saw how to judge how good a classifier is: via a loss function, the cross-entropy loss, the minimization of which is equivalent to maximum likelihood. In the next step, we saw how to minimize the loss via gradient descent, by iteratively stepping in the direction of the negative gradient. We then introduced backpropagation as a means of computing the derivative of the loss with respect to each node by performing a breadth-first search and multiplying according to the chain rule. We used all that we’ve learned to train a good linear classifier for the red/blue example dataset. Finally, we learned about multi-layer perceptrons as a means of learning non-linear decision boundaries, implemented an MLP with one hidden layer, and successfully trained it on a dataset that is not linearly separable.

Next steps

The upcoming sections will be focused on providing hands-on experience in neural network training. Continue with the next part: VI: TensorFlow

Modded your code a bit to allow me to input my own data with clicks:

right click-blue

left click-red

middle click-start optimization

https://gist.github.com/soulslicer/4459fb456cc37454eb7b5791011f4e6f

Great, thanks. Could you please modify the code to point to the original blog post, rather than to the about-me page?

Thanks for the great resource! I’m learning a lot from it 🙂

Sorry if I’m mistaken, but is there a chance that the code for generating the red and blue points is accidentally the same as the single-layer perceptron?

It seems to me that the code

# Create red points centered at (-2, -2)

red_points = np.random.randn(50, 2) - 2*np.ones((50, 2))

# Create blue points centered at (2, 2)

blue_points = np.random.randn(50, 2) + 2*np.ones((50, 2))

produces a linearly separable set of points, and doesn’t resemble your plot.

Indeed, this code produces something like this: https://uploads.disquscdn.com/images/765c4fc3860256fcd2bfd8af7a509c19a8e420ede28ca92753aaba231b738b82.png

Replace it with this:

# Create two clusters of red points centered at (0, 0) and (1, 1), respectively.

red_points = np.concatenate((

0.2*np.random.randn(25, 2) + np.array([[0, 0]]*25),

0.2*np.random.randn(25, 2) + np.array([[1, 1]]*25)

))

# Create two clusters of blue points centered at (0, 1) and (1, 0), respectively.

blue_points = np.concatenate((

0.2*np.random.randn(25, 2) + np.array([[0, 1]]*25),

0.2*np.random.randn(25, 2) + np.array([[1, 0]]*25)

))

You’re right! I accidentally embedded the wrong code cell. It is now fixed.

The series of posts are really good and I am going through them trying to absorb and implement as much as possible.

One question about the multi-layer perceptron. Here we are only considering one perceptron per layer, is that right?